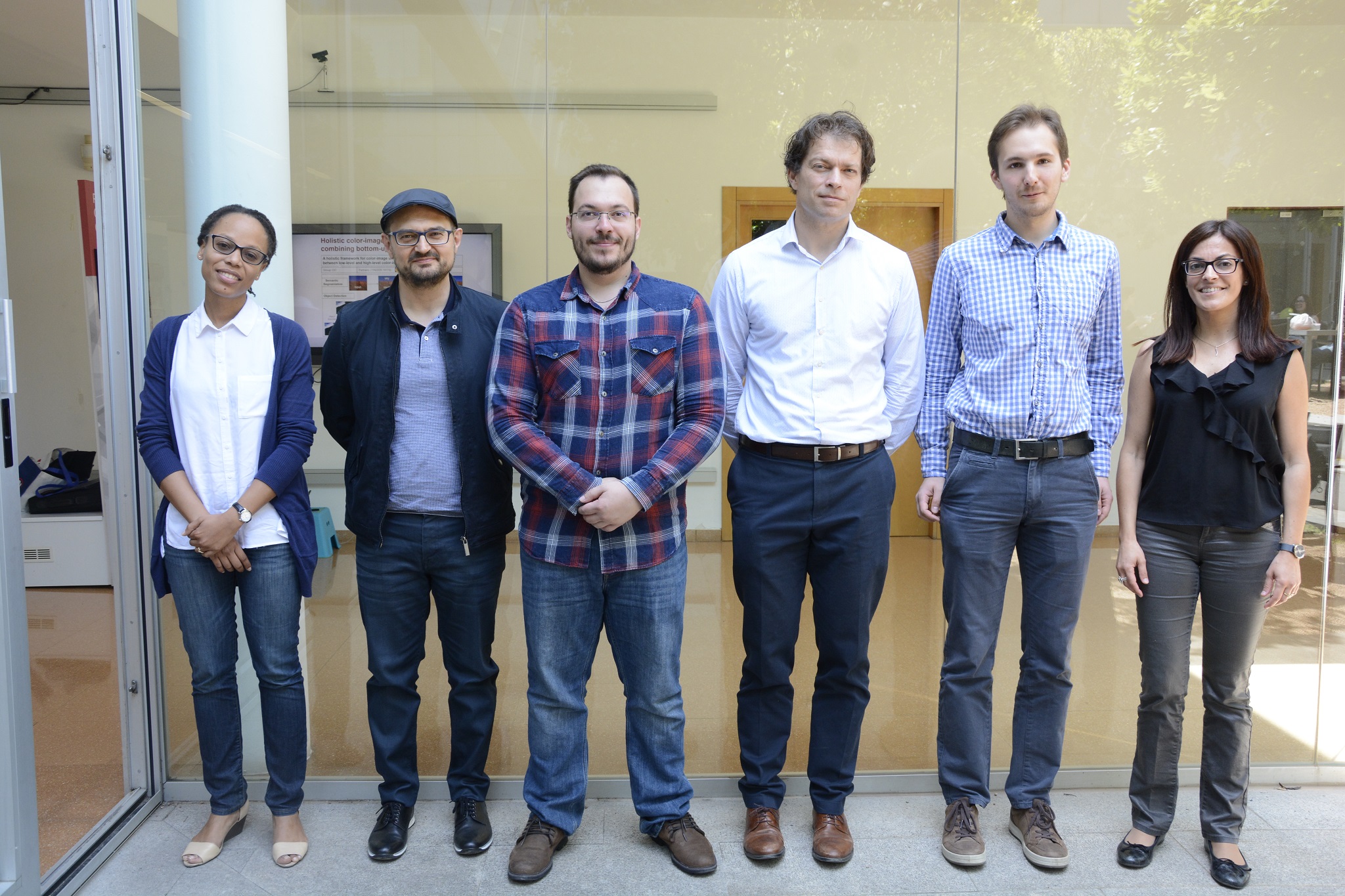

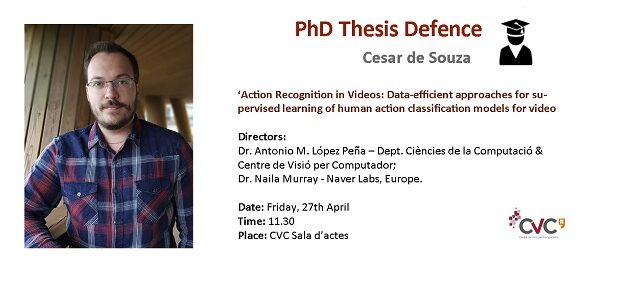

CVC has a new PhD on its record!

Abstract:

In this dissertation, we explore different ways to perform human action recognition in video clips. We focus on data efficiency, proposing new approaches that alleviate the need for laborious and time-consuming manual data annotation. In the first part of this dissertation, we start by analyzing previous state-of-the-art models, comparing their differences and similarities in order to pinpoint where their real strengths comes from. Leveraging this information, we then proceed to boost the classification accuracy of shallow models to levels that rival deep neural networks. We introduce hybrid video classification architectures based on carefully designed unsupervised representations of handcrafted spatiotemporal features classified by supervised deep networks. We show in our experiments that our hybrid model combine the best of both worlds: it is data efficient (trained on 150 to 10,000 short clips) and yet improved significantly on the state of the art, including deep models trained on millions of manually labeled images and videos. In the second part of this research, we investigate the generation of synthetic training data for action recognition, as it has recently shown promising results for a variety of other computer vision tasks. We propose an interpretable parametric generative model of human action videos that relies on procedural generation and other computer graphics techniques of modern game engines. We generate a diverse, realistic, and physically plausible dataset of human action videos, called PHAV for "Procedural Human Action Videos". It contains a total of 39,982 videos, with more than 1,000 examples for each action of 35 categories. Our approach is not limited to existing motion capture sequences, and we procedurally define 14 synthetic actions. We then introduce deep multi-task representation learning architectures to mix synthetic and real videos, even if the action categories differ. Our experiments on the UCF-101 and HMDB-51 benchmarks suggest that combining our large set of synthetic videos with small real-world datasets can boost recognition performance, outperforming fine-tuning state-of-the-art unsupervised generative models of videos.

Keywords: computer vision, action recognition, machine learning, applied mathematics.