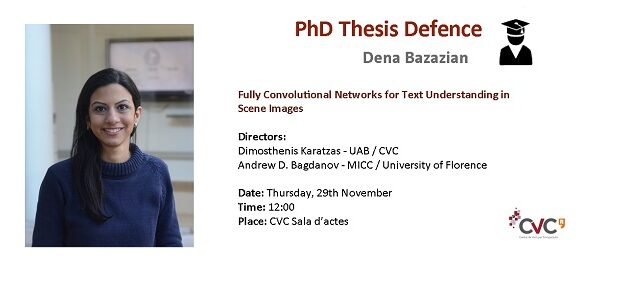

CVC has a new PhD on its record!

Abstract:

Text understanding in scene images has attracted considerable attention in the computer vision community. It is an important task in many applications, as text carries semantically rich information about scene content and context. For instance, reading text in a scene can be applied to autonomous driving, scene understanding or assisting visually impaired people. The general aim of scene text understanding is to localize and recognize the text in scene images. Text regions are first localized in the original image by a trained detector model and then fed into a recognition module. The tasks of localization and recognition are highly correlated, since an inaccurate localization can degrade recognition.

The main purpose of this thesis is to devise efficient methods for scene text understanding. We investigate how the latest results in deep learning can advance text understanding pipelines. Recently, Fully Convolutional Networks (FCNs) and derived methods have achieved strong performance on semantic segmentation and pixel-level classification tasks. We therefore took advantage of the strengths of FCN approaches to detect text in natural scenes.

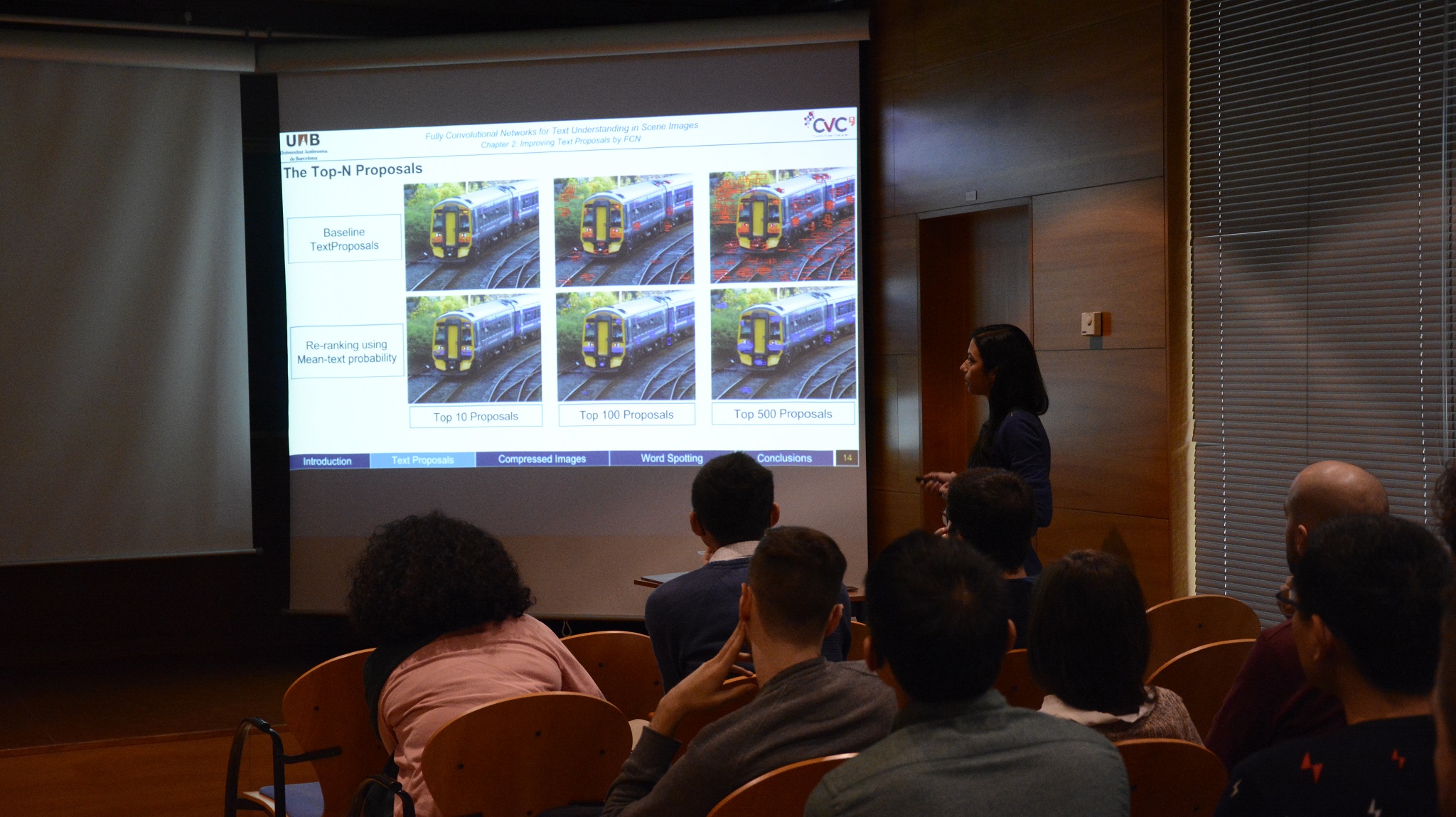

In this thesis, we have focused on two challenging tasks in scene text understanding: text detection and word spotting. For text detection, we have proposed an efficient text proposal technique for scene images. Our baseline is the Text Proposals method, an approach that reduces the search space of possible text regions in an image. To improve on it, we combined it with Fully Convolutional Networks, which efficiently reduces the number of proposals while maintaining the same level of accuracy and thus yields a significant speed-up. Our experiments demonstrate that this text proposal approach achieves significantly higher recall rates than line-based text localization techniques, while also producing better-quality localizations. We have also applied this technique to compressed images, such as videos from wearable egocentric cameras.
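One way to picture how a pixel-level text map could prune a set of proposals is sketched below. This is only an illustration under stated assumptions, not the method of the thesis: the abstract does not say how the two components are combined. The names `mean_text_score` and `filter_proposals`, the `(x1, y1, x2, y2)` box format and the 0.5 threshold are all hypothetical.

```python
# Illustrative sketch only: prune text proposals using a per-pixel text
# probability map such as an FCN might output. The scoring rule (mean
# probability inside the box) and the threshold are assumptions.

def mean_text_score(heatmap, box):
    """Average text probability inside box = (x1, y1, x2, y2), end-exclusive."""
    x1, y1, x2, y2 = box
    values = [heatmap[y][x] for y in range(y1, y2) for x in range(x1, x2)]
    return sum(values) / len(values) if values else 0.0


def filter_proposals(heatmap, proposals, threshold=0.5):
    """Keep only the proposals whose mean text probability reaches threshold."""
    return [box for box in proposals
            if mean_text_score(heatmap, box) >= threshold]


# Toy 4x4 map: the right half is confidently text, the left half is not.
heatmap = [[0.0, 0.0, 1.0, 1.0] for _ in range(4)]
proposals = [(0, 0, 2, 4), (2, 0, 4, 4), (1, 0, 3, 4)]
kept = filter_proposals(heatmap, proposals)
```

In this toy case the proposal covering only background is discarded, while the two that overlap the text region are kept.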

For the task of word spotting, we have introduced a novel mid-level word representation method. We have proposed a technique to create and exploit an intermediate representation of images based on text attributes, which roughly correspond to character probability maps. Our representation extends the concept of the Pyramidal Histogram of Characters (PHOC) by exploiting Fully Convolutional Networks to derive a pixel-wise mapping of the character distribution within candidate word regions. We call this representation the Soft-PHOC. Furthermore, we show how to use Soft-PHOC descriptors for word spotting tasks through an efficient text line proposal algorithm.
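For readers unfamiliar with PHOC, the following is a minimal sketch of the binary PHOC of a word string as it is commonly described in the word spotting literature. It is not the Soft-PHOC of the thesis, which is computed pixel-wise over image regions. The 36-symbol alphabet, the three pyramid levels and the function name `phoc` are assumptions made for illustration.

```python
import string

# Assumed alphabet: lowercase Latin letters plus digits (36 symbols).
ALPHABET = string.ascii_lowercase + string.digits


def phoc(word, levels=(1, 2, 3), alphabet=ALPHABET):
    """Binary PHOC of a word.

    At each pyramid level the word is split into `level` equal regions.
    A character is assigned to a region when at least half of the
    character's own extent falls inside that region.
    """
    word = word.lower()
    n = len(word)
    vector = []
    for level in levels:
        for region in range(level):
            bits = [0] * len(alphabet)
            # Integer arithmetic in units of 1 / (n * level) avoids
            # floating-point issues at the 50% boundary.
            r_start, r_end = region * n, (region + 1) * n
            for i, ch in enumerate(word):
                if ch not in alphabet:
                    continue  # symbols outside the assumed alphabet are skipped
                c_start, c_end = i * level, (i + 1) * level
                overlap = min(r_end, c_end) - max(r_start, c_start)
                if 2 * overlap >= level:  # overlap >= half the character width
                    bits[alphabet.index(ch)] = 1
            vector.extend(bits)
    return vector


descriptor = phoc("text")
```

With the assumed alphabet and three levels, each word maps to a binary vector of 36 × (1 + 2 + 3) = 216 dimensions. Two words can then be compared through the distance between their vectors.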

To evaluate the detected text, we propose a novel line-based evaluation alongside the classic bounding-box-based approach. We test our method on incidental scene text images, which comprise real-life scenarios such as urban scenes. These images are challenging because of the combination of complex backgrounds, perspective, variety of scripts and languages, short text and little linguistic context.
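Bounding-box-based evaluation is usually built on intersection over union (IoU) between detected and ground-truth boxes. The sketch below shows that common criterion, assuming axis-aligned `(x1, y1, x2, y2)` boxes and a 0.5 matching threshold. The abstract does not state the exact protocol used, and the line-based evaluation proposed in the thesis is not reproduced here. The function names are hypothetical.

```python
def iou(a, b):
    """Intersection over union of two axis-aligned boxes (x1, y1, x2, y2)."""
    ax1, ay1, ax2, ay2 = a
    bx1, by1, bx2, by2 = b
    inter_w = max(0, min(ax2, bx2) - max(ax1, bx1))
    inter_h = max(0, min(ay2, by2) - max(ay1, by1))
    inter = inter_w * inter_h
    union = (ax2 - ax1) * (ay2 - ay1) + (bx2 - bx1) * (by2 - by1) - inter
    return inter / union if union > 0 else 0.0


def recall(ground_truth, detections, threshold=0.5):
    """Fraction of ground-truth boxes matched by at least one detection."""
    if not ground_truth:
        return 0.0
    matched = sum(
        1 for gt in ground_truth
        if any(iou(gt, det) >= threshold for det in detections)
    )
    return matched / len(ground_truth)
```

A stricter protocol would also enforce one-to-one matching between detections and ground-truth boxes. This sketch omits that step for brevity.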

Keywords: text understanding, text detection, word spotting, fully convolutional network (FCN), scene images.