CVC has a new PhD on its record!

Arnau Baró successfully defended his dissertation in Computer Science on November 14, 2022, and he is now a Doctor of Philosophy from the Universitat Autònoma de Barcelona.

What is the thesis about?

The transcription of sheet music into some machine-readable format can be carried out manually. However, the complexity of music notation inevitably leads to burdensome software for music score editing, which makes the whole process very time-consuming and prone to errors. Consequently, automatic transcription systems for musical documents represent interesting tools.

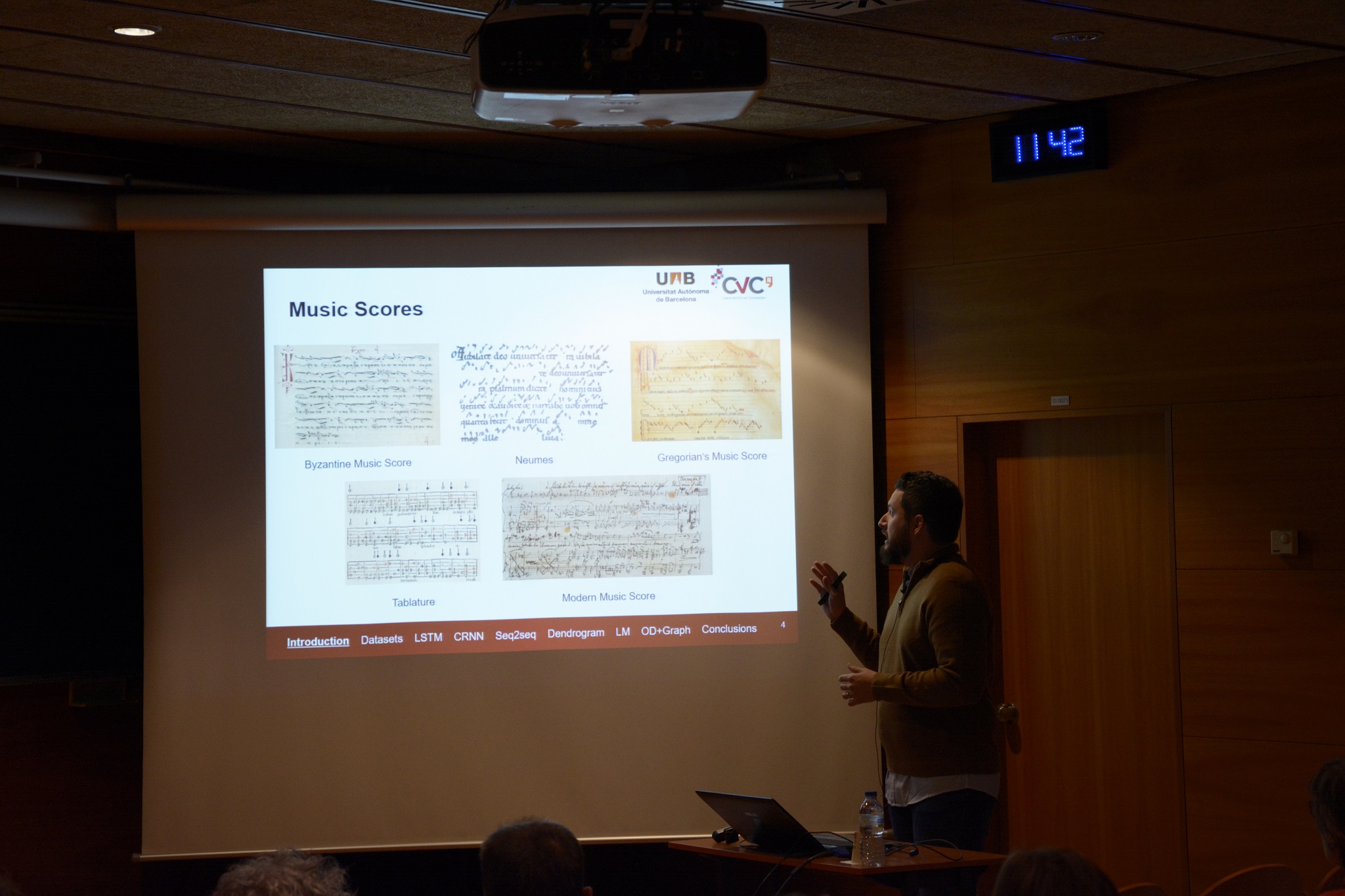

Document analysis is the subject that deals with the extraction and processing of document content through image and pattern recognition. It is a branch of computer vision. When the source documents are music scores, the field devoted to this task is known as Optical Music Recognition (OMR). Typically, an OMR system takes an image of a music score and automatically extracts its content into a symbolic format such as MEI or MusicXML.

In this dissertation, we have investigated different methods for recognizing a single staff section (e.g. scores for violin, flute, etc.), much in the same way as most text recognition research focuses on recognizing words appearing in a given line image. These methods are based on two different methodologies. On the one hand, we present two methods based on Recurrent Neural Networks, in particular the Long Short-Term Memory (LSTM) network. On the other hand, we detail a method based on Sequence-to-Sequence models.

Music context is needed to improve OMR results, just as language models and dictionaries help in handwriting recognition. For example, syntactical rules and grammars can be defined to cope with ambiguities in the rhythm: in music theory, the time signature defines the number of beats per bar. Thus, in the second part of this dissertation, different methodologies have been investigated to improve the recognition results. We have explored three different methods: (a) a graphical tree-structured representation, the dendrogram, which joins primitives at each level following a set of rules, (b) the incorporation of Language Models to model the probability of a sequence of tokens, and (c) graph neural networks that analyze the music score to avoid meaningless relationships between music primitives.
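To make the rhythm rule concrete, here is a minimal Python sketch of how a time signature can be used to reject implausible readings of a bar. It is only an illustration and not the method developed in the thesis: the token names in `DURATIONS`, the helper functions, and the idea of receiving candidate readings ordered by recognizer confidence are all assumptions made for this example.

```python
from fractions import Fraction

# Hypothetical duration vocabulary (an assumption for this sketch):
# token name -> length expressed as a fraction of a whole note.
DURATIONS = {
    "whole": Fraction(1, 1),
    "half": Fraction(1, 2),
    "quarter": Fraction(1, 4),
    "eighth": Fraction(1, 8),
    "sixteenth": Fraction(1, 16),
}


def bar_length(beats, beat_unit):
    """Length of one bar, in whole notes, for a time signature beats/beat_unit."""
    return Fraction(beats, beat_unit)


def bar_is_consistent(tokens, beats, beat_unit):
    """True if the durations in `tokens` add up exactly to one bar."""
    total = sum((DURATIONS[t] for t in tokens), Fraction(0))
    return total == bar_length(beats, beat_unit)


def pick_consistent(candidates, beats, beat_unit):
    """Return the first candidate reading that fills the bar exactly.

    `candidates` is assumed to be ordered from most to least confident.
    If no candidate satisfies the rule, fall back to the top one.
    """
    for tokens in candidates:
        if bar_is_consistent(tokens, beats, beat_unit):
            return tokens
    return candidates[0]
```

For instance, in 4/4 a reading of three quarter notes plus an eighth note leaves the bar incomplete, so `pick_consistent` would prefer an alternative reading of four quarter notes if the recognizer offers one. Exact fractions are used instead of floats so that sums of short durations compare reliably.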

Finally, to train all these methodologies, and given that the datasets in the literature are tied to specific methods, we have created four different music datasets. Two of them are synthetic, one with a modern and one with an old handwritten appearance, whereas the other two contain real handwritten scores, one modern and one old.